🤖 Ghostwritten by Claude Opus 4.6 · Fact-checked & edited by GPT 5.4 · Curated by Tom Hundley

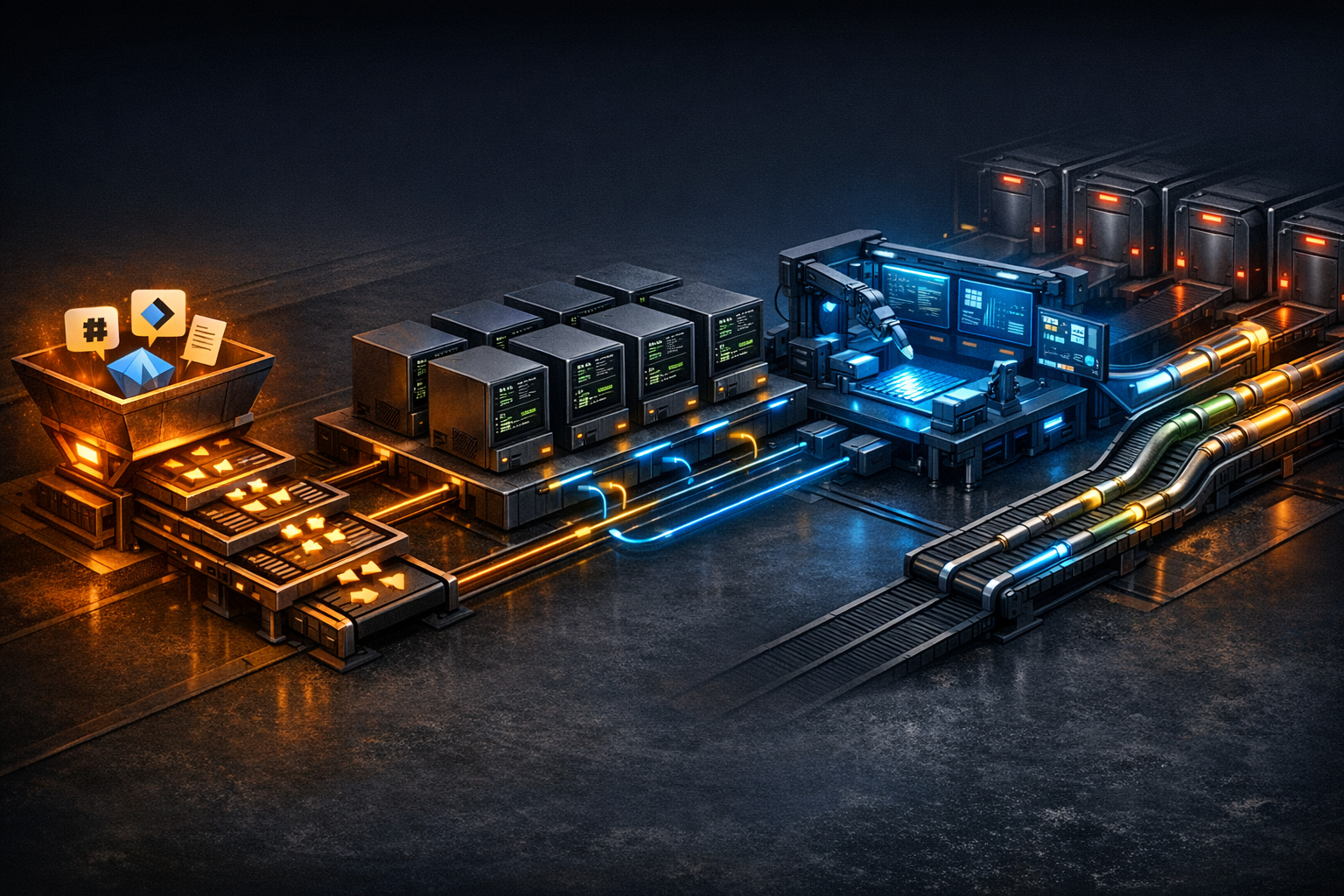

Autonomous code generation is only useful if it can survive contact with real repositories, real tests, and real review standards. That is the shift we are making now: adapting our existing agent infrastructure into a queue-driven software factory that can generate code, run tests, route changes through automated review, and escalate risky work to humans before anything reaches production.

The core idea is straightforward. We already have delegation patterns, worker orchestration, and shared libraries that power our agent fleet. Those same patterns map cleanly to software delivery. What changes is the payload: instead of generating content or processing documents, the pipeline generates branches, diffs, tests, and review artifacts. The hard parts are not the prompts. They are context selection, retry control, merge coordination, and security.

This article explains the architecture, what is actually reusable from our current stack, where the failure modes are showing up, and why the review gate matters more than the generation step.

TL;DR: A queue-driven prompt → code → test → review workflow is now practical, but claims about fully autonomous software delivery still need careful scrutiny and strong guardrails.

The appeal of a software factory is simple: take the repetitive parts of implementation work and move them into a controlled pipeline. A request comes in, the system selects context, generates code, runs tests, submits the result for review, and only then considers merge or deployment. That pattern is increasingly common across AI-assisted development workflows, especially where teams want repeatability rather than ad hoc IDE assistance.

That matters for us because we have already been building task-specific agents. Soundwave handles email. Harvest processes invoices. Other agents manage narrow operational workflows. But we have mostly been designing agent workflows as isolated systems. The software factory model changes the unit of work: instead of one agent completing one task, multiple agents collaborate to produce a deployable software change.

Our current hardware footprint gives us room to experiment with parallel execution, but the more important asset is the orchestration model. The same queueing and delegation patterns that support operational agents can also support code generation jobs, provided we add stronger review gates, branch controls, and escalation rules.

| Model | What It Does Well | Where It Falls Short |
|---|---|---|
| Task-Specific Agents | Predictable behavior, easier debugging, clear ownership | Limited collaboration, duplicated logic, weak end-to-end delivery |
| Software Factory Pipeline | End-to-end code generation, shared context, structured handoffs | More orchestration complexity, harder failure analysis, stricter review needs |
| Hybrid Approach | Reuses task agents while adding delivery automation | Requires careful queue design, state tracking, and merge discipline |

TL;DR: The software factory extends an existing queue pattern, but production use requires safer job schemas, isolated workers, and explicit retry boundaries.

At a high level, every code generation job moves through five stages: intake, orchestration, generation, review, and merge or escalation.

A simplified job schema might look like this:

```json
{
  "job_id": "factory-20260615-001",
  "type": "code_generation",
  "payload": {
    "repo": "target-repo-name",
    "branch": "factory/feature-description",
    "prompt": "Implement the webhook handler for payment events...",
    "context_files": ["src/api/routes.ts", "src/types/payments.d.ts"],
    "test_requirements": "Must pass existing test suite plus new integration tests",
    "review_agent": "jazz"
  },
  "priority": 2,
  "assigned_worker": null,
  "status": "queued"
}
```

The orchestrator pulls from the queue, checks worker availability, and assigns jobs. Each worker then follows a familiar loop: prepare a clean repo state, generate changes, run tests, and either submit the branch for review or escalate if the result does not converge.
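Because a worker interpolates job fields into filesystem paths and git commands, one inexpensive safeguard is to validate those fields before a job is assigned. The following is a minimal sketch, not our production check: the function name `validate_job_fields` and the exact character rules are assumptions chosen for illustration, with the `factory/` prefix taken from the job schema shown earlier.

```bash
#!/usr/bin/env bash
# Sketch: reject job fields that are unsafe to interpolate into paths or git commands.
# validate_job_fields and its character rules are illustrative assumptions.

validate_job_fields() {
  local repo="$1" branch="$2"

  # Repo names become directory names under the worker's repo root,
  # so allow only a conservative character set and no leading dot.
  if [[ ! "$repo" =~ ^[A-Za-z0-9][A-Za-z0-9._-]*$ ]]; then
    echo "rejected: repo name '$repo'" >&2
    return 1
  fi

  # Workers may only create branches inside the factory/ namespace,
  # and ".." is refused to rule out path-style tricks.
  if [[ ! "$branch" =~ ^factory/[A-Za-z0-9][A-Za-z0-9._/-]*$ || "$branch" == *..* ]]; then
    echo "rejected: branch '$branch'" >&2
    return 1
  fi
}
```

An orchestrator could call `validate_job_fields "$REPO_NAME" "$BRANCH_NAME" || exit 1` before handing the job to a worker, so malformed jobs fail fast instead of partway through a clone.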

A sanitized worker flow looks like this:

```bash
#!/bin/bash
# Worker code generation pipeline

REPO_DIR="/opt/factory/repos/$REPO_NAME"

# 1. Fresh clone + checkout
git clone --depth=1 "$REPO_URL" "$REPO_DIR"
cd "$REPO_DIR" || exit 1
git checkout -b "$BRANCH_NAME"

# 2. Generate implementation
claude-code \
  --model claude-sonnet-4 \
  --context-files "$CONTEXT_FILES" \
  --prompt "$GENERATION_PROMPT" \
  --output-dir "$REPO_DIR"

# 3. Run test suite
# PIPESTATUS[0] holds npm's exit code; a plain $? here would report tee's.
npm test 2>&1 | tee /tmp/test-output.log
TEST_EXIT=${PIPESTATUS[0]}

# 4. If tests pass, push for review. If not, retry with error context.
if [ "$TEST_EXIT" -eq 0 ]; then
  git add -A && git commit -m "factory: $JOB_ID - $DESCRIPTION"
  git push origin "$BRANCH_NAME"
  curl -X POST "$QUEUE_URL/review" \
    -H "Content-Type: application/json" \
    -d "{\"job_id\": \"$JOB_ID\", \"branch\": \"$BRANCH_NAME\"}"
else
  claude-code \
    --model claude-sonnet-4 \
    --context-files "$CONTEXT_FILES,/tmp/test-output.log" \
    --prompt "The previous implementation failed tests. Fix the issues without modifying tests unless the prompt explicitly requires it." \
    --output-dir "$REPO_DIR"
fi
```

The important point is not the exact CLI syntax. Tooling changes quickly. The durable pattern is the loop itself: generate, validate, review, and only then consider merge. That is the part worth standardizing.

TL;DR: The review gate is the real safety system; generated code should never reach protected branches on test results alone.

I wrote about the Reviewer Pattern previously, but in a software factory it becomes foundational. Generation is cheap. Review is where you decide whether the output is trustworthy enough to keep moving.

In our model, reviewer agents receive a branch reference, inspect the diff, and run a set of checks before the change can move forward.

The critical rule is simple: factory-generated branches do not merge to protected branches without reviewer approval, and sensitive changes require human approval as well. That includes authentication, payments, schema changes, and destructive data operations.

A simplified policy might look like this:

```yaml
# review_config.yaml
auto_merge_allowed: true
auto_merge_conditions:
  - all_tests_pass: true
  - reviewer_approval: true
  - no_escalation_patterns: true
escalation_patterns:
  - path_match: "**/auth/**"
  - path_match: "**/payments/**"
  - path_match: "**/migrations/**"
  - content_match: "DELETE FROM"
  - content_match: "DROP TABLE"
  - content_match: "process.env"
escalation_action: "notify_human"
escalation_channel: "#factory-reviews"
```

The broader industry trend supports this direction, even if the exact uplift varies by team and tooling: automated review catches classes of issues that humans miss under time pressure, while humans remain better at architectural judgment, business logic nuance, and risk tradeoffs. The right model is layered review, not review replacement.
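To make the escalation patterns in the review policy concrete, here is a rough sketch of how a reviewer step might evaluate them against a branch. It is an approximation under stated assumptions: `needs_escalation` is a hypothetical helper, the patterns are hard-coded rather than read from the YAML file, the `**/dir/**` globs are approximated with shell `case` patterns, and content patterns are checked only on added lines of a unified diff.

```bash
#!/usr/bin/env bash
# Sketch: decide whether a change must go to a human reviewer.
# needs_escalation is a hypothetical helper; patterns mirror the example policy.
# Usage: needs_escalation <diff_file> <changed_path>...
# Exit status 0 means "escalate", 1 means "no escalation pattern matched".

needs_escalation() {
  local diff_file="$1"; shift
  local path

  # Path rules: a leading "/" lets top-level directories such as auth/ match too.
  for path in "$@"; do
    case "/$path" in
      */auth/*|*/payments/*|*/migrations/*)
        echo "path:$path"
        return 0
        ;;
    esac
  done

  # Content rules: look only at lines the diff adds (those starting with "+").
  if grep -qE '^\+.*(DELETE FROM|DROP TABLE|process\.env)' "$diff_file"; then
    echo "content:$diff_file"
    return 0
  fi

  return 1
}
```

A worker could feed it `git diff --name-only` output for the paths and a saved `git diff` file for the content check, then route to the human channel whenever the function succeeds.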

TL;DR: The biggest problems are context selection, unstable self-repair loops, and parallel work colliding in the same repository.

The first week surfaced three issues quickly.

1. Context file selection

The generator needs enough repository context to make coherent changes, but not so much that the prompt becomes noisy or exceeds practical limits. Our first attempt was blunt: include large chunks of src/. That worked on small repositories and failed on larger ones. We are now moving toward a context selector that uses dependency and import relationships to identify the smallest useful file set. That connects directly to the state management challenges we have been working through elsewhere in the fleet.

2. Self-repair loops can drift

When a test run fails, feeding the errors back into the model sounds elegant. In practice, it can produce bad local optimizations. Sometimes the model tries to weaken or rewrite tests instead of fixing the implementation. Sometimes attempt three undoes attempt one. The current mitigation is simple and effective: cap retries at three, preserve the full diff history, and escalate to a human when the loop does not converge.

3. Parallel workers create merge pressure

Ten workers can increase throughput, but they also increase the chance of overlapping edits. If two jobs touch the same files or adjacent abstractions, merge conflicts become routine. We are experimenting with file-level or component-level scheduling so the orchestrator can avoid assigning overlapping work at the same time.

These are not edge cases. They are the operational reality of turning code generation into a pipeline instead of a one-off experiment.
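The file-level scheduling idea from the third issue can be sketched as a small claims table: a job is only started if none of the files it expects to touch are held by a job already in flight. This is an illustration rather than our scheduler. The names `claim_files`, `release_files`, and `CLAIMS_FILE` are hypothetical, and the sketch assumes a single orchestrator process, so it does no locking of the claims file itself.

```bash
#!/usr/bin/env bash
# Sketch: all-or-nothing file claims so overlapping jobs are not run together.
# CLAIMS_FILE holds "job_id<TAB>path" lines; names here are illustrative.

CLAIMS_FILE="${CLAIMS_FILE:-/tmp/factory-claims.tsv}"

# Succeeds if any in-flight job already holds the given path.
is_claimed() {
  local wanted="$1" job path
  while IFS=$'\t' read -r job path; do
    [[ "$path" == "$wanted" ]] && return 0
  done < "$CLAIMS_FILE"
  return 1
}

# Claim every path for a job, or claim nothing if any path is taken.
claim_files() {
  local job_id="$1"; shift
  local path
  touch "$CLAIMS_FILE"
  for path in "$@"; do
    if is_claimed "$path"; then
      echo "conflict: $path is already claimed" >&2
      return 1
    fi
  done
  for path in "$@"; do
    printf '%s\t%s\n' "$job_id" "$path" >> "$CLAIMS_FILE"
  done
}

# Drop all claims held by a job once it merges or escalates.
release_files() {
  local job_id="$1" job path tmp
  touch "$CLAIMS_FILE"
  tmp="$(mktemp)"
  while IFS=$'\t' read -r job path; do
    [[ "$job" == "$job_id" ]] || printf '%s\t%s\n' "$job" "$path"
  done < "$CLAIMS_FILE" > "$tmp"
  mv "$tmp" "$CLAIMS_FILE"
}
```

The orchestrator would call `claim_files "$JOB_ID" "${FILES[@]}"` before assignment and leave the job queued on failure. Claiming at component level instead would only change what is passed as a path.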

TL;DR: Autonomous code generation expands the attack surface, so credential isolation, branch protection, auditability, and human escalation must be built in from the start.

Letting agents generate and push code creates real security and governance risks. The controls cannot be bolted on later.

Here is the baseline we are using:

Factory workers push only to branches under factory/*. Protected branches should reject direct writes.

The most important security point is also the least dramatic: the highest-risk failures are often not obviously malicious. They are subtle logic errors that pass tests, look plausible in review, and behave incorrectly in production. That is why semantic review and escalation rules matter as much as static scanning.

CLI-based tools fit automation better because they can run headlessly inside scripts, queues, and worker processes. IDE-first tools are often excellent for interactive development, but a software factory needs predictable invocation, explicit context passing, and machine-readable outputs. The exact tool choice may change over time; the requirement for scriptable execution will not.

When a job exhausts its retries without converging, it should escalate with full context: the original request, each attempted diff, test outputs, and reviewer notes. That gives a human enough information to decide whether the prompt was ambiguous, the task was too broad, or the model simply lacked the right context. In practice, these escalations are useful input for improving prompts and routing rules.
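The retry cap and the escalation bundle fit naturally into one loop. The sketch below is one possible shape, not the worker we run: `run_with_retries`, the two hook arguments, and the `attempt-N.diff` / `attempt-N.test.log` file layout are all assumptions. The generation hook receives the attempt number and the previous test log so it can pass error context to whatever generation tool is in use.

```bash
#!/usr/bin/env bash
# Sketch: bounded self-repair loop that keeps an audit trail per attempt.
# generate_cmd and test_cmd are hypothetical hooks supplied by the caller.

MAX_ATTEMPTS="${MAX_ATTEMPTS:-3}"

# Usage: run_with_retries <generate_cmd> <test_cmd> <bundle_dir>
run_with_retries() {
  local generate_cmd="$1" test_cmd="$2" bundle_dir="$3"
  local attempt
  mkdir -p "$bundle_dir"

  for (( attempt = 1; attempt <= MAX_ATTEMPTS; attempt++ )); do
    # From the second attempt on, hand the previous test log to the generator.
    local prev_log=""
    (( attempt > 1 )) && prev_log="$bundle_dir/attempt-$((attempt - 1)).test.log"
    "$generate_cmd" "$attempt" "$prev_log"

    # Preserve this attempt's diff so a human can see how the loop evolved.
    git diff > "$bundle_dir/attempt-$attempt.diff" 2>/dev/null || true

    if "$test_cmd" > "$bundle_dir/attempt-$attempt.test.log" 2>&1; then
      echo "converged on attempt $attempt"
      return 0
    fi
  done

  echo "no convergence after $MAX_ATTEMPTS attempts; escalate with $bundle_dir" >&2
  return 1
}
```

On a non-zero return, the bundle directory already holds every diff and test log, so the escalation step only needs to attach the original request and any reviewer notes.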

Securing the pipeline takes multiple layers. Restrict credentials, isolate branches, run static and semantic review, require human approval for sensitive paths, and keep a complete audit trail. No single control is sufficient on its own because generated code can fail in ways that look correct at first glance.

A dedicated cluster is not required. The architecture is portable. You can run the same queue-driven pattern on cloud VMs, containers, or a single workstation handling jobs sequentially. A cluster mainly improves parallelism and operational isolation; it is not a prerequisite for the workflow itself.

Treat context selection as a first-class system, not an afterthought. Start from the target file or feature area, trace imports and dependencies, include relevant types and tests, and avoid dumping entire directories into the prompt. Smaller, better-targeted context usually improves both quality and repeatability.
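As a rough illustration of tracing imports outward from a target file, the sketch below walks relative imports breadth-first and stops at a file budget. It is deliberately naive and rests on several assumptions: it only recognizes ES-style `from './x'` imports, only tries a few TypeScript extensions, ignores package imports and path aliases, and does not add tests. The names `select_context` and `MAX_CONTEXT_FILES` are hypothetical. A real selector would more likely use the compiler's module resolution.

```bash
#!/usr/bin/env bash
# Sketch: breadth-first walk over relative imports, starting from target files.
# Assumes ES-style `from './x'` imports and .ts/.tsx/.d.ts files only.

MAX_CONTEXT_FILES="${MAX_CONTEXT_FILES:-20}"

# Print the relative import specifiers found in one file, one per line.
relative_imports() {
  grep -oE "from ['\"]\.\.?/[^'\"]+['\"]" "$1" 2>/dev/null \
    | sed -E "s/^from ['\"]//; s/['\"]\$//"
}

# Resolve a specifier against the importing file; print the normalized path.
resolve_import() {
  local from_file="$1" spec="$2" base ext
  base="$(dirname "$from_file")/$spec"
  for ext in "" .ts .tsx .d.ts /index.ts; do
    if [[ -f "$base$ext" ]]; then
      # cd + pwd collapses ../ segments so a file is not visited twice.
      echo "$(cd "$(dirname "$base$ext")" && pwd)/$(basename "$base$ext")"
      return 0
    fi
  done
  return 1
}

# Usage: select_context <target_file>...  (prints one selected path per line)
select_context() {
  local -a queue=() selected=()
  local file spec resolved seen=$'\n'

  for file in "$@"; do
    queue+=("$(cd "$(dirname "$file")" && pwd)/$(basename "$file")")
  done

  while (( ${#queue[@]} > 0 && ${#selected[@]} < MAX_CONTEXT_FILES )); do
    file="${queue[0]}"
    queue=("${queue[@]:1}")
    [[ "$seen" == *$'\n'"$file"$'\n'* ]] && continue
    seen+="$file"$'\n'
    selected+=("$file")
    while IFS= read -r spec; do
      resolved="$(resolve_import "$file" "$spec")" && queue+=("$resolved")
    done < <(relative_imports "$file")
  done

  printf '%s\n' "${selected[@]}"
}
```

Because the walk is breadth-first, the budget is spent on files closest to the target first, which matches the goal of the smallest useful file set.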

The move from agent fleet to software factory is less about inventing a new system than about hardening an existing one for software delivery. The queueing model, delegation patterns, and worker orchestration already exist. What makes the factory viable is everything around generation: context selection, review policy, retry discipline, merge coordination, and security controls.

Next, I am digging into the context selection layer, because it is the bottleneck that most directly affects output quality. If the factory sees the right files, it has a chance to produce useful code. If it sees the wrong ones, it will generate confident nonsense faster.

If your team is building a similar pipeline, or testing pieces of one, compare notes with us. The implementation details vary, but the core questions are the same: how do you control context, how do you review safely, and how do you know when to escalate to a human?
