🤖 Ghostwritten by Claude Opus 4.6 · Fact-checked & edited by GPT 5.4 · Curated by Tom Hundley

MCP 2.0 matters because it closes several gaps that limited enterprise adoption of earlier MCP implementations: standardized authorization, a more deployment-friendly remote transport, richer tool metadata, and better support for long-running workflows. If you're already running MCP servers, the practical question is not whether the protocol is interesting, but whether the new spec changes your architecture, security model, and migration plan. In most cases, it does.

This guide explains what changed from MCP 1.x, what appears solid versus still emerging, and how to approach implementation without overcommitting to claims the ecosystem has not fully proven yet. It also outlines a phased migration path: update transport, add tool annotations, integrate authorization, and then optimize batching and streaming where they add measurable value.

A note on sourcing: several claims around ratification dates, vendor commitments, and first-production deployments are too recent to verify confidently from available public reference material. Where that is the case, this article treats them as plausible but unverified rather than established fact.

TL;DR: MCP 2.0 appears to add the enterprise-oriented features teams wanted most: standardized authorization, improved remote transport, batching, richer tool metadata, and broader multimodal workflow support.

The specification delta between MCP 1.x and 2.0 is meaningful, especially for teams deploying MCP beyond local developer tooling.

| Capability | MCP 1.x | MCP 2.0 | Enterprise Impact |

|---|---|---|---|

| Authorization | No protocol-level standard | Standardized auth model described for interoperable deployments | Reduces the need for custom auth wrappers around each server |

| Transport | stdio + SSE-based remote patterns | stdio + Streamable HTTP | Simplifies remote deployment behind standard HTTP infrastructure |

| Batching | Single request/response patterns | JSON-RPC batching | Fewer round-trips for multi-tool workflows |

| Tool Metadata | Name + description only | Tool annotations such as read-only and destructive semantics | Lets clients and gateways make safer execution decisions |

| Audio | Limited or absent in earlier implementations | Audio content support | Better fit for voice and multimodal workflows |

| Completions | Limited support | Completions capability | Servers can help clients suggest argument values |

| Elicitation | Limited support | Server-initiated prompts | Servers can request clarification mid-workflow |

SSE was workable for demos and some lightweight integrations, but it created friction in enterprise environments:

Streamable HTTP is a better fit because it keeps the interaction model inside standard HTTP request/response semantics while still allowing streaming when needed. That makes it easier to reason about with existing API gateways, observability tools, and security controls.

One of the most useful additions is structured metadata about tool behavior. In earlier MCP patterns, a client often had to infer risk from a tool name and description alone. Annotations make that explicit.

{

"name": "delete_media_plan",

"description": "Permanently deletes a media plan and all associated line items",

"annotations": {

"readOnly": false,

"destructive": true,

"idempotent": true,

"openWorld": false

}

}These flags can support policies such as:

If you're building an enterprise MCP gateway, annotations are the metadata layer most likely to drive policy enforcement.

TL;DR: MCP 2.0 is designed to support standardized authorization for interoperable deployments, but teams should verify the exact required flows and metadata endpoints against the current spec before implementation.

The lack of a consistent authorization story was a major barrier to enterprise MCP adoption. Standardized auth is therefore one of the most important changes in the 2.0 era. That said, some implementation details in fast-moving protocol ecosystems can shift, so treat code examples as patterns to validate against the latest specification and SDK docs.

A common pattern is to expose authorization metadata through a well-known endpoint. The exact endpoint shape and required fields should be checked against the current MCP and OAuth documentation.

import express from "express";

const app = express();

app.get("/.well-known/oauth-authorization-server", (req, res) => {

res.json({

issuer: "https://mcp.yourcompany.com",

authorization_endpoint: "https://mcp.yourcompany.com/authorize",

token_endpoint: "https://mcp.yourcompany.com/token",

registration_endpoint: "https://mcp.yourcompany.com/register",

response_types_supported: ["code"],

grant_types_supported: ["authorization_code", "refresh_token"],

code_challenge_methods_supported: ["S256"],

token_endpoint_auth_methods_supported: ["client_secret_post"],

scopes_supported: ["mcp:read", "mcp:write", "mcp:admin"]

});

});For user-facing clients such as IDEs and chat interfaces, PKCE-based authorization code flow is the safest default pattern.

import crypto from "crypto";

function generatePKCE() {

const verifier = crypto.randomBytes(32).toString("base64url");

const challenge = crypto

.createHash("sha256")

.update(verifier)

.digest("base64url");

return { verifier, challenge };

}

async function initiateMcpAuth(serverUrl: string) {

const metadataRes = await fetch(

`${serverUrl}/.well-known/oauth-authorization-server`

);

const metadata = await metadataRes.json();

const { verifier, challenge } = generatePKCE();

const authUrl = new URL(metadata.authorization_endpoint);

authUrl.searchParams.set("response_type", "code");

authUrl.searchParams.set("client_id", process.env.MCP_CLIENT_ID!);

authUrl.searchParams.set("redirect_uri", "http://localhost:3000/callback");

authUrl.searchParams.set("scope", "mcp:read mcp:write");

authUrl.searchParams.set("code_challenge", challenge);

authUrl.searchParams.set("code_challenge_method", "S256");

authUrl.searchParams.set("state", crypto.randomBytes(16).toString("hex"));

return { authUrl: authUrl.toString(), verifier };

}Most enterprises should not create a separate identity silo for MCP. A better pattern is to place MCP-compatible authorization in front of your existing identity provider and map enterprise identity claims to MCP scopes.

That usually means:

This keeps identity centralized while making MCP servers easier to onboard.

TL;DR: Streamable HTTP is the most practical remote transport improvement in MCP 2.0, and batching can reduce latency for workflows that need multiple tool calls before reasoning.

The exact SDK APIs may evolve, but the implementation pattern is straightforward: register tools, mount a Streamable HTTP transport, and enable batching only if your handlers are concurrency-safe.

import { McpServer } from "@modelcontextprotocol/sdk/server";

import { StreamableHTTPServerTransport } from "@modelcontextprotocol/sdk/server/streamableHttp";

import express from "express";

import crypto from "crypto";

const app = express();

const server = new McpServer({

name: "enterprise-data-server",

version: "2.0.0"

});

server.tool(

"query_sales_data",

"Query sales data with SQL-like filters",

{

query: { type: "string", description: "Filter expression" },

timeRange: { type: "string", description: "ISO 8601 interval" }

},

{

annotations: {

readOnly: true,

destructive: false,

idempotent: true,

openWorld: false

}

},

async ({ query, timeRange }) => {

const results = await salesDb.query(query, timeRange);

return {

content: [{

type: "text",

text: JSON.stringify(results, null, 2)

}]

};

}

);

const transport = new StreamableHTTPServerTransport({

sessionIdGenerator: () => crypto.randomUUID(),

enableJsonRpcBatching: true

});

app.post("/mcp", async (req, res) => {

await transport.handleRequest(req, res);

});

await server.connect(transport);

app.listen(3001);Batching is valuable when an agent needs several independent reads before it can decide what to do next.

[

{

"jsonrpc": "2.0",

"id": 1,

"method": "tools/call",

"params": {

"name": "query_sales_data",

"arguments": { "query": "region=EMEA", "timeRange": "2026-01/2026-03" }

}

},

{

"jsonrpc": "2.0",

"id": 2,

"method": "tools/call",

"params": {

"name": "query_inventory",

"arguments": { "warehouse": "EU-CENTRAL", "status": "low_stock" }

}

},

{

"jsonrpc": "2.0",

"id": 3,

"method": "tools/call",

"params": {

"name": "get_forecast",

"arguments": { "region": "EMEA", "horizon": "Q2_2026" }

}

}

]In practice, the benefit depends on three things:

Batching is most useful for read-heavy context gathering, not for state-changing workflows that need strict sequencing.

Long-running tools benefit from progress notifications because they reduce user uncertainty and make timeouts easier to manage.

server.tool(

"generate_quarterly_report",

"Generate comprehensive quarterly financial report",

{ quarter: { type: "string" }, includeForecasts: { type: "boolean" } },

{

annotations: { readOnly: true, destructive: false, idempotent: true, openWorld: false }

},

async ({ quarter, includeForecasts }, { sendNotification }) => {

await sendNotification({

method: "notifications/progress",

params: { progress: 0, total: 4, message: "Fetching revenue data..." }

});

const revenue = await fetchRevenueData(quarter);

await sendNotification({

method: "notifications/progress",

params: { progress: 1, total: 4, message: "Computing margins..." }

});

const margins = computeMargins(revenue);

return {

content: [{ type: "text", text: formatReport(revenue, margins) }]

};

}

);TL;DR: The most practical enterprise pattern is one MCP server per business domain, acting as a translation and policy layer in front of existing APIs and systems.

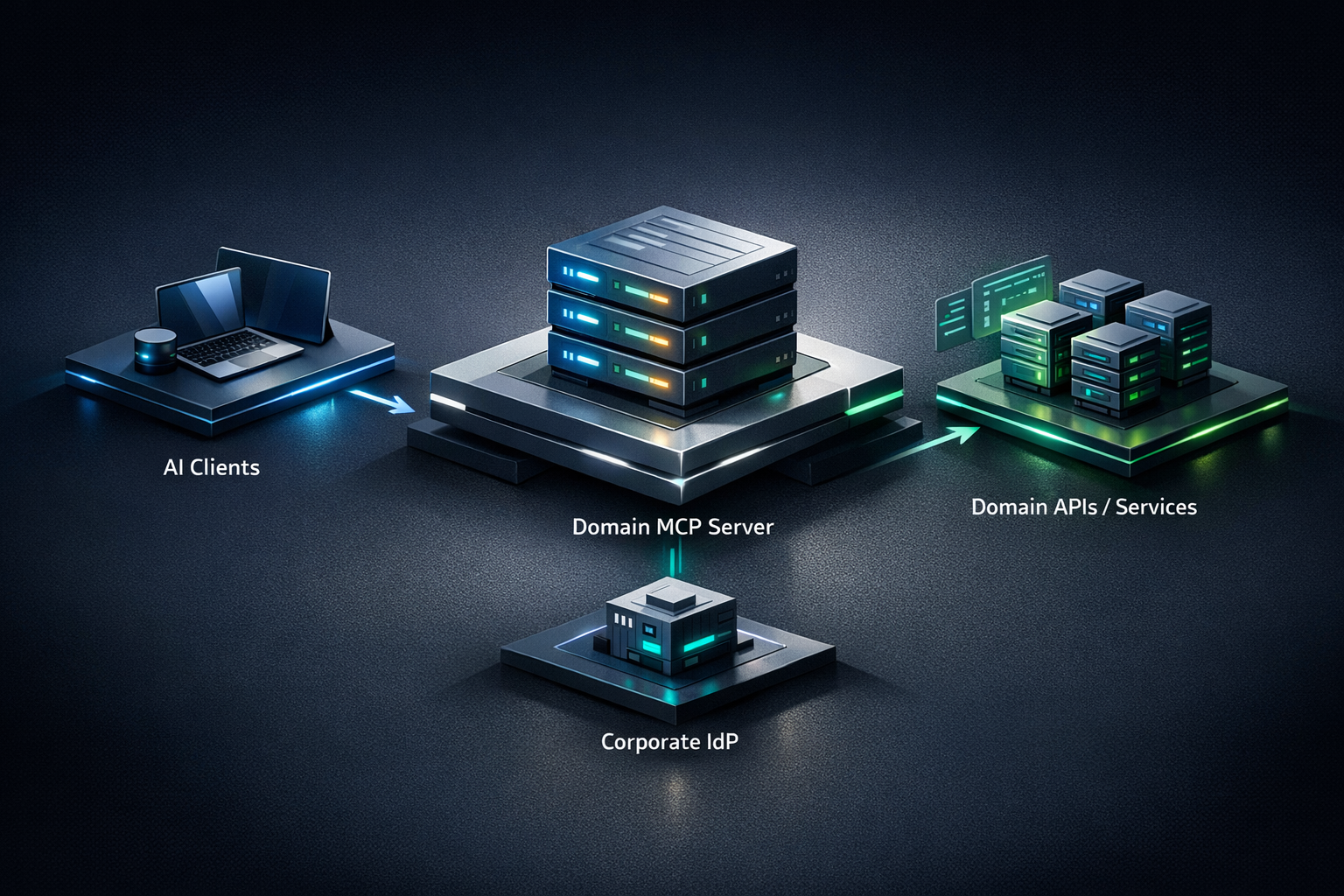

The strongest architectural idea in this article is not tied to any one vendor or launch claim: MCP servers work best as domain gateways, not as monoliths and not as thin wrappers around every individual endpoint.

The MCP server should usually do five things:

That approach lets you reuse existing business logic instead of rewriting it inside the MCP layer.

A finance-domain MCP server might expose tools like these:

server.tool(

"get_budget_variance",

"Retrieve budget variance for a cost center",

schema,

{ annotations: { readOnly: true, idempotent: true, destructive: false, openWorld: false } },

handler

);

server.tool(

"approve_purchase_order",

"Approve a pending purchase order",

schema,

{ annotations: { readOnly: false, idempotent: false, destructive: false, openWorld: true } },

handler

);

server.tool(

"void_invoice",

"Void an invoice according to finance policy",

schema,

{ annotations: { readOnly: false, idempotent: true, destructive: true, openWorld: false } },

handler

);The annotations communicate operational semantics to clients and gateways. That makes policy enforcement more reliable than relying on naming conventions alone.

TL;DR: MCP 2.0 looks promising for enterprise adoption, but teams should separate protocol value from hype by validating client support, SDK maturity, and migration cost before committing.

Several ecosystem claims in the original draft were too recent or too specific to verify confidently, including exact vendor implementation timelines, the number of committed tools, and assertions about the first production deployment. Those may prove true, but they should not be presented as settled fact without public confirmation.

What can be said with confidence is this: MCP becomes more attractive when mainstream clients, IDEs, and agent platforms converge on the same transport and auth expectations. That interoperability is the real adoption driver.

| Factor | Start Now | Wait | Skip MCP |

|---|---|---|---|

| You have multiple internal systems agents need to access | ✅ | — | — |

| You need a standard tool interface across clients | ✅ | — | — |

| You already rely on AI assistants in engineering workflows | ✅ | — | — |

| Your auth and policy model is not ready | — | ✅ | — |

| Your use case is simple and single-system | — | — | ✅ |

| You have no clear agent strategy yet | — | ✅ | — |

A phased migration is still the right approach.

Phase 1: Transport

Replace older remote transport patterns with Streamable HTTP.

// Before

import { SSEServerTransport } from "@modelcontextprotocol/sdk/server/sse";

const transport = new SSEServerTransport("/messages", res);

// After

import { StreamableHTTPServerTransport } from "@modelcontextprotocol/sdk/server/streamableHttp";

const transport = new StreamableHTTPServerTransport({

sessionIdGenerator: () => crypto.randomUUID(),

enableJsonRpcBatching: true

});Phase 2: Tool annotations

Audit every tool and classify it correctly.

// Audit checklist per tool:

// 1. Does it modify state? -> readOnly: false

// 2. Can it destroy data? -> destructive: true

// 3. Is calling it twice safe? -> idempotent: true/false

// 4. Does it access external systems? -> openWorld: truePhase 3: Authorization

Integrate with your identity provider and validate the exact MCP 2.0 auth requirements before rollout.

Phase 4: Batching optimization

Enable batching only where concurrency is safe and useful.

Phase 5: Streaming upgrades

Add progress notifications for long-running tools where UX or timeout behavior justifies the extra complexity.

Compatibility depends on which part of MCP the client uses. Local stdio-based integrations may continue to work with minimal change, while remote clients built around older SSE patterns will likely need updates. In practice, teams should test client-by-client rather than assume blanket backward compatibility.

No. Annotations are metadata, not enforcement. They help clients and gateways make better decisions, but the actual policy behavior depends on the client, gateway, or orchestration layer consuming them.

Batching helps most when an agent needs several independent read operations across network boundaries before it can reason or respond. It helps less when calls are sequentially dependent or when the bottleneck is the downstream system rather than HTTP overhead.

Usually no. One server per bounded business domain is the better default. That keeps ownership, authorization, and tool design aligned with how enterprise teams already organize systems and responsibilities.

For non-interactive workloads, use a machine-to-machine pattern supported by your identity platform and validate that it aligns with the current MCP 2.0 authorization model. Avoid bypassing centralized identity just because a workflow is automated.

MCP 2.0 looks like a meaningful step toward making MCP viable as enterprise integration infrastructure rather than just a developer convenience layer. The protocol direction is promising, but implementation discipline matters more than enthusiasm: validate the current spec, confirm SDK behavior, classify tools carefully, and integrate with your existing identity and policy stack.

If your team is evaluating MCP 2.0 or planning a migration path, Elegant Software Solutions can help you design the right domain boundaries, authorization model, and rollout strategy for production use. Schedule a technical consultation to discuss your MCP roadmap.

Discover more content: