🤖 Ghostwritten by Claude Opus 4.6 · Fact-checked & edited by GPT 5.4 · Curated by Tom Hundley

AI is no longer something CEOs can delegate entirely to IT, a vendor, or an innovation team. In 2026, leadership teams need enough AI fluency to judge where AI can create advantage, where the risks are unacceptable, and how operating models need to change when software can reason through multi-step work. That does not mean CEOs need to become engineers. It means they need the same kind of working literacy they already apply to finance, legal risk, and talent strategy.

The companies pulling ahead are not necessarily the ones with the biggest AI budgets. They are the ones whose leaders can connect AI capabilities to business priorities, set clear governance, and make fast go or no-go decisions on deployment. This article focuses on that leadership layer: the behaviors, decision rights, and organizational choices that separate AI-competent executives from leaders who merely approve spend.

This is not a repackaged digital transformation argument. Our earlier pieces on enterprise AI strategy and AI roadmapping address what to pursue and when. This article addresses the missing piece: what CEOs and executive teams must personally know and do to lead effectively in an AI-shaped operating environment.

TL;DR: Delegating AI strategy entirely to your CTO or a vendor creates a leadership blind spot that can slow execution, weaken governance, and misdirect investment.

Many mid-market CEOs ($20M-$500M revenue) have followed a sensible playbook: hire strong technologists, fund a few pilots, and wait for results. That approach worked for many earlier technology waves. AI is different because it does not stay neatly inside the IT function. Systems that can draft, classify, summarize, recommend, and increasingly orchestrate multi-step work affect sales, operations, finance, legal, and customer experience at the same time.

When the CEO does not understand the practical capability envelope of these systems, three problems usually follow:

Public commentary from major AI vendors and enterprise analysts has pointed in the same direction: generative AI adoption is broad, but deep integration into core business processes remains uneven. That matters because the next phase of value creation will come less from experimentation and more from embedding AI into real operating workflows.

CEO AI competency is not about coding or understanding model architecture in depth. It means being able to:

The practical shift is simple: AI becomes a lens for strategic decision-making, not a separate budget line that leadership reviews once a quarter.

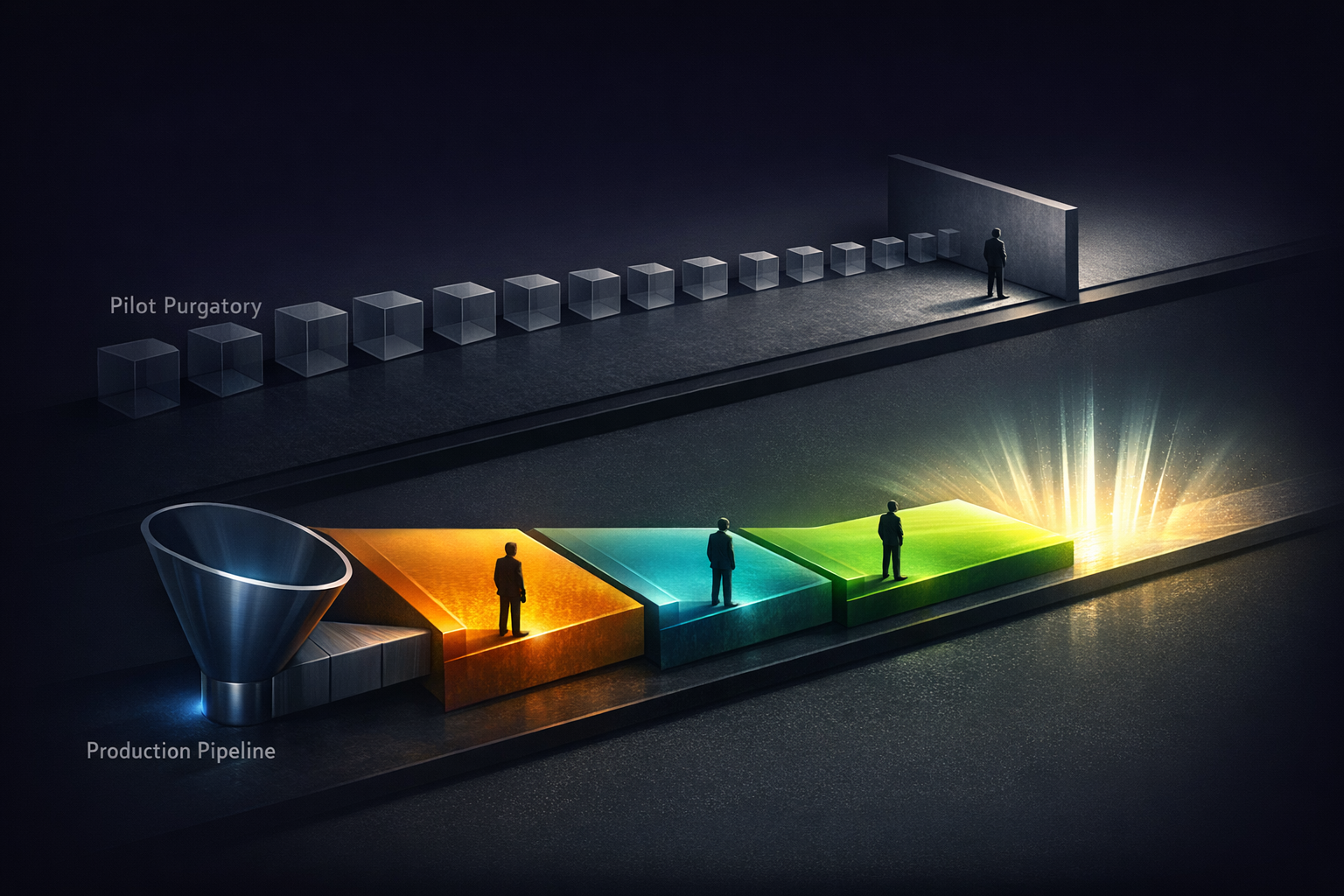

TL;DR: Companies get stuck in pilots when ownership is diffuse. They reach production faster when executives define success early, time-box validation, and assign one accountable decision-maker.

Analyst guidance from firms such as Gartner has increasingly emphasized execution, governance, and value realization over awareness. That matches what many operators already see: most companies no longer need to be convinced that AI matters. They need a repeatable way to decide what should move from experiment to production.

A widely cited pattern in enterprise AI is that many pilots never scale. Exact rates vary by study and methodology, so it is safer to say that a substantial share of pilots stall before production. Elegant Software Solutions sees this repeatedly with mid-market clients: a promising proof of concept enters an open-ended evaluation loop because no one with P&L authority owns the final decision.

Here is a deployment framework that works in practice.

Before technical work begins, the CEO or COO should answer three questions:

If the answer to any of these is no, move the idea to a backlog. Do not call it a pilot yet.

Every pilot needs a hard deadline and predefined success criteria. Not "explore AI in customer service," but "reduce average resolution time for Tier 1 tickets by 20% within six weeks while maintaining customer satisfaction." The CEO does not need to run the test, but should review the outcome personally.
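One way to keep success criteria from drifting is to write them down as data before the pilot starts. The sketch below is a minimal illustration in Python of the customer service example above. The class name, field names, and every number in the usage note are hypothetical, chosen only to show the shape of a predefined, time-boxed check.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PilotCriteria:
    """Success criteria agreed before the pilot starts (all fields are illustrative)."""
    baseline_minutes: float   # average Tier 1 resolution time before the pilot
    target_reduction: float   # required improvement, e.g. 0.20 for a 20% reduction
    min_csat: float           # customer satisfaction floor the pilot must hold
    deadline: date            # hard end date of the validation window


def met_criteria(criteria: PilotCriteria, observed_minutes: float,
                 observed_csat: float, measured_on: date) -> bool:
    """Return True only if every predefined criterion is satisfied by the deadline."""
    reduction = (criteria.baseline_minutes - observed_minutes) / criteria.baseline_minutes
    return (
        measured_on <= criteria.deadline
        and reduction >= criteria.target_reduction
        and observed_csat >= criteria.min_csat
    )
```

For instance, with a hypothetical baseline of 30 minutes, a 20% target, and a satisfaction floor of 4.2, a pilot measured at 23 minutes and 4.3 before the deadline would pass, while one measured at 25 minutes would not. The point is not the code itself but that the pass or fail rule exists before any results come in.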

This is where many companies fail. The decision to deploy, iterate, or stop should be made by one executive with budget authority and clear criteria. Not a committee. Not a steering group with no owner. One accountable leader.

In practice, disciplined teams can move from scoped validation to production in a matter of weeks or a few months, depending on integration complexity and risk level. Organizations without that structure often drift far longer because no one is empowered to decide.

TL;DR: Good AI governance creates clear decision rights and risk thresholds so teams can move quickly without creating unmanaged exposure.

AI governance is no longer just a compliance topic. Boards increasingly expect management teams to explain where AI is being used, how risk is classified, and who is accountable when systems affect customers, financial decisions, or regulated workflows.

For most mid-market companies, the best governance model is not a heavyweight framework borrowed from a large technology company. It is a simple operating system for decision-making built around three pillars:

| Governance Pillar | What It Covers | Who Owns It | Board Reporting |
|---|---|---|---|
| Decision Rights | Which teams can deploy AI, what approval thresholds apply, and where autonomous operation is allowed | CEO / COO | Quarterly review of deployment authority |
| Risk Classification | Tiered risk levels for internal, customer-facing, financial, and regulated use cases | General Counsel + CTO | Regular risk dashboard with escalation triggers |
| Performance Accountability | Business outcome metrics for each production AI system, plus review and sunset criteria | Business Unit Leaders | Quarterly ROI review tied to strategy |

The most common governance failure is not too little oversight. It is unclear authority. When every AI deployment requires C-suite approval, teams stop experimenting. When no approval is required, shadow AI spreads.

A practical model is tiered autonomy:

This mirrors financial signing authority. Low-risk decisions move quickly. High-stakes decisions get tighter scrutiny.
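The signing-authority analogy can be made concrete as a simple lookup from use-case category to required approver. The sketch below is an assumption-heavy illustration in Python: the four categories come from the risk classification row in the table above, but the approver assigned to each category is a placeholder, not a recommended policy. Each company would set its own thresholds.

```python
from enum import Enum


class UseCase(Enum):
    """Use-case categories taken from the risk classification pillar."""
    INTERNAL = "internal"
    CUSTOMER_FACING = "customer-facing"
    FINANCIAL = "financial"
    REGULATED = "regulated"


# Hypothetical approval mapping: the approver names are placeholders
# chosen for illustration and should be replaced with real decision rights.
REQUIRED_APPROVER = {
    UseCase.INTERNAL: "team lead",
    UseCase.CUSTOMER_FACING: "business unit leader",
    UseCase.FINANCIAL: "executive review",
    UseCase.REGULATED: "executive and legal review",
}


def required_approver(use_case: UseCase) -> str:
    """Look up who must sign off before a use case in this category is deployed."""
    return REQUIRED_APPROVER[use_case]
```

The design choice worth noting is that the mapping is explicit and exhaustive. A team proposing a deployment can see in advance who decides, which is what keeps low-risk work moving and high-stakes work under scrutiny.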

TL;DR: Mid-market companies usually do not need a large AI department. They need a small set of roles that connect business priorities, integration work, and operational accountability.

The AI talent conversation often centers on machine learning researchers and model engineers. That is usually the wrong framing for mid-market companies. Most are not building foundation models. They are applying existing models and tools to real business processes. That requires a different talent mix.

Three roles consistently matter most:

Notice what is not automatically required: a chief AI officer. In many mid-market environments, a standalone chief AI officer role can fragment accountability unless the company is large, highly regulated, or already operating AI at significant scale. In most cases, AI should remain embedded in business leadership rather than isolated in a separate silo.

Before hiring externally, assess the AI literacy of your current leadership team. Many executive teams have more latent capacity than they realize; they simply have not had structured exposure to current tools, realistic use cases, and governance tradeoffs.

A focused executive workshop can help leaders build a shared vocabulary, test real workflows, and align on decision rights. The exact format matters less than the outcome: leaders should leave able to evaluate opportunities, ask better questions, and make faster decisions.

TL;DR: Companies that build AI capability now are accumulating operational learning, cleaner processes, and stronger appeal to talent, all of which become harder to replicate later.

Every major technology shift has a point where early experimentation turns into structural advantage. AI appears to be entering that phase for many mid-market firms.

Three forces are driving the shift:

Operational learning compounds. Companies that have already deployed AI in real workflows now have internal knowledge about where it works, where it fails, and what conditions improve ROI. That learning is difficult to buy off the shelf.

Talent follows momentum. Employees who want to work with modern tools tend to prefer companies that are serious about deployment, not just discussion. That can improve both hiring and retention.

Customer expectations are rising. In many B2B contexts, buyers increasingly expect faster responses, better forecasting, and more proactive service. AI is not the only way to deliver those outcomes, but it is becoming one of the most practical ways to do so at scale.

The implication is straightforward: the longer a company waits, the more it must catch up not just on tooling, but on process design, data readiness, governance, and organizational learning.

CEO AI competency means understanding AI well enough to evaluate opportunities, set guardrails, and hold teams accountable for business outcomes. A CEO does not need to build models or write code. They do need to know what AI can and cannot do reliably, what risks require escalation, and how to judge whether a use case is strategically relevant.

Use time-boxed pilots with predefined business metrics and one accountable executive owner. Start with a narrow use case, define success before work begins, and decide in advance what would justify scaling, iterating, or stopping. Risk is reduced when scope, ownership, and decision criteria are explicit.

At minimum, define decision rights, risk tiers, and performance accountability. Teams should know who can approve what, which use cases require legal or executive review, and how production systems will be measured after launch. If those three elements are clear, governance is usually strong enough to support responsible speed.

Treat AI as a capability embedded across functions, not only as a standalone innovation fund. Budget for training, integration, change management, and ongoing monitoring in addition to software spend. The right amount varies widely by industry, maturity, and use case, so fixed percentage rules are less useful than a portfolio view tied to business priorities.

The biggest mistake is treating AI as a procurement decision instead of a leadership and operating model issue. Buying tools without clarifying ownership, governance, and business outcomes usually creates scattered pilots and weak adoption. The second mistake is pursuing technically impressive use cases that do not matter strategically.

The shift from treating AI as a technology initiative to treating it as a leadership competency does not happen through reading alone. It happens when executive teams engage directly with the tools, define decision rights, and redesign workflows around measurable business outcomes.

If your organization is seeing familiar patterns such as stalled pilots, unclear governance, weak ownership, or scattered AI spending, those are solvable problems. Elegant Software Solutions works with leadership teams to build practical AI fluency, governance frameworks, and execution plans that fit the realities of mid-market organizations.

The competitive question is no longer whether AI matters. It is whether your leadership team is prepared to use it deliberately.
