🤖 Ghostwritten by Claude Opus 4.6 · Fact-checked & edited by GPT 5.4 · Curated by Tom Hundley

Short answer: not yet, and not on the basis of Apple's M5 alone. As of today, Apple has not publicly announced M5 Mac mini hardware, confirmed M5 performance for local LLM inference, or published any "4x faster" local-model benchmark that teams can rely on for budgeting. But the underlying question is still worth answering now: if the next generation of Apple Silicon materially improves on-device inference, should an agent fleet shift work from cloud APIs to local models?

For most teams, the answer will be yes for narrow, high-volume tasks and no for complex reasoning. That means the real upgrade decision is architectural, not just hardware-driven. If your agents spend most of their time classifying messages, extracting fields, routing tasks, or summarizing short inputs, faster local inference could reduce API spend. If they generate long-form content, handle ambiguous requests, or require frontier-model reasoning, cloud APIs will still do the heavy lifting.

That's the lens I used to model what an eventual M5 upgrade could mean for our 12-unit setup: 2 orchestrators and 10 workers. The conclusion is more useful than "buy immediately" or "ignore it." Build the routing layer now, benchmark on current hardware, and treat future M5 gains as upside rather than an assumption.

TL;DR: API costs usually scale with usage, so high-volume agent tasks are the best candidates for local inference if quality holds up.

When Ben and I originally spec'd out the Mac mini fleet, the hardware was primarily orchestration infrastructure: scheduling agents, managing state, and routing messages. The actual model inference lived in the cloud. Every time Sparkles answers a Slack question, Soundwave processes an email, or Concierge handles a general-purpose task, we're making API calls to Anthropic or OpenAI.

Here's what our current workload mix looks like by agent:

| Agent | Primary Model | Avg. Daily Calls | Avg. Tokens/Call | Relative API Cost |
|---|---|---|---|---|
| Sparkles (Slack) | Claude Haiku | 80-120 | ~2,000 | Low to medium |
| Soundwave (Email) | Claude Sonnet | 40-60 | ~4,000 | Medium |
| Concierge (General) | Claude Sonnet | 30-50 | ~6,000 | Medium-high |
| Blog Pipeline | Claude Opus | 5-10 | ~15,000 | High per call |
| Orchestrator | GPT / Claude | 200+ | ~1,500 | Medium-high |
| Harvest/Insurance | Claude Sonnet | 20-40 | ~3,000 | Medium |

The key insight is that Sparkles and the Orchestrator handle high volume with relatively small payloads, while the Blog Pipeline handles lower volume with much larger payloads. Those are different workloads with different local-inference profiles.
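To make that comparison concrete, the table's figures can be turned into rough daily token volumes. This is a minimal sketch, assuming the midpoint of each call range and treating the Orchestrator's "200+" as exactly 200 (a lower bound). The `WORKLOAD` structure and `daily_tokens` helper are illustrative names, not part of our codebase.

```python
# Rough daily token volume per agent, derived from the workload table.
# Assumption: midpoint of each daily-call range; "200+" is treated as 200.
WORKLOAD = {
    # agent: (avg. daily calls, avg. tokens per call)
    "Sparkles": (100, 2_000),
    "Soundwave": (50, 4_000),
    "Concierge": (40, 6_000),
    "Blog Pipeline": (7.5, 15_000),
    "Orchestrator": (200, 1_500),
    "Harvest/Insurance": (30, 3_000),
}


def daily_tokens(workload):
    """Return {agent: estimated tokens per day}, largest volume first."""
    totals = {agent: calls * tokens for agent, (calls, tokens) in workload.items()}
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


if __name__ == "__main__":
    for agent, total in daily_tokens(WORKLOAD).items():
        print(f"{agent:<18} ~{total:,.0f} tokens/day")
```

Under these assumptions the Orchestrator moves the most tokens per day despite having the smallest payloads. Token volume is only a proxy for spend, since per-token prices differ by model tier.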

TL;DR: Faster Apple Silicon could make local 7B-class models more practical, but any specific M5 performance claim is still speculative until Apple ships hardware and independent benchmarks exist.

Let me be precise here. The original version of this article treated M5 performance as if it were already public and measurable. It isn't. Apple has not announced M5 MacBook or Mac mini specs that we can verify today, and terms like "Neural Accelerator cores embedded directly in the GPU" are not Apple's standard public terminology for current Apple Silicon disclosures. Apple does include a Neural Engine and GPU acceleration for ML workloads, and frameworks such as MLX and Metal can make local inference on Apple Silicon attractive. But the exact architecture and performance of any future M5 system remain unverified.

What we can say with confidence is this: on current Apple Silicon, quantized 7B-class models can already run locally at usable speeds for some agent tasks, especially summarization, classification, extraction, and routing. If a future chip meaningfully improves throughput while preserving Apple's power efficiency, that would widen the set of tasks that make economic sense to run on-device.

On current hardware, a test setup might look like this:

```python
import mlx_lm

# Load a 4-bit quantized 7B instruct model and its tokenizer.
model, tokenizer = mlx_lm.load("mlx-community/Mistral-7B-Instruct-v0.3-4bit")

response = mlx_lm.generate(
    model,
    tokenizer,
    prompt="Summarize this Slack thread for action items: ...",
    max_tokens=512,
)
```

The exact tokens-per-second result depends on the model, quantization level, prompt length, generation settings, available memory, and the specific Apple Silicon chip. That's why I removed the original article's hard numbers for M4 and projected M5 throughput. Without reproducible benchmarks, those figures read as more certain than they are.
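Because throughput varies with so many factors, it is worth measuring on your own prompts instead of quoting a headline figure. The harness below is a minimal sketch, not our production benchmark. `generate_fn` and `count_tokens_fn` are placeholder callables you would wrap around your actual runtime and tokenizer, and the fake generator exists only so the sketch runs without a model installed.

```python
import time
from statistics import median


def measure_throughput(generate_fn, count_tokens_fn, prompt, runs=3):
    """Return the median generated-tokens-per-second over several runs.

    generate_fn(prompt) -> str and count_tokens_fn(text) -> int are
    placeholders: wrap whatever local runtime and tokenizer you use.
    """
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        output = generate_fn(prompt)
        elapsed = time.perf_counter() - start
        if elapsed > 0:
            rates.append(count_tokens_fn(output) / elapsed)
    # The median is less sensitive to a slow first (warm-up) run than the mean.
    return median(rates) if rates else 0.0


if __name__ == "__main__":
    # Stand-in generator so the sketch runs without a model installed.
    def fake_generate(prompt):
        time.sleep(0.01)
        return "action item " * 20

    rate = measure_throughput(fake_generate, lambda text: len(text.split()), "demo")
    print(f"{rate:.0f} tokens/sec (whitespace tokens, fake model)")
```

This times the whole call, so prompt processing is included in the rate. Separating prefill from generation would need hooks into the specific runtime.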

Open-weight 7B models have improved quickly, but they still do not reliably match top-tier hosted models on difficult reasoning, instruction following, and long-context work. Public benchmarks and community leaderboards can help identify trends, but they change quickly and should not be treated as a substitute for task-specific evaluation.

For narrow, well-prompted tasks, though, local models can be surprisingly competitive. That's the practical threshold that matters for an agent fleet.

TL;DR: The best near-term design is a router that sends simple tasks to local models and reserves cloud models for work where quality matters more than marginal cost.

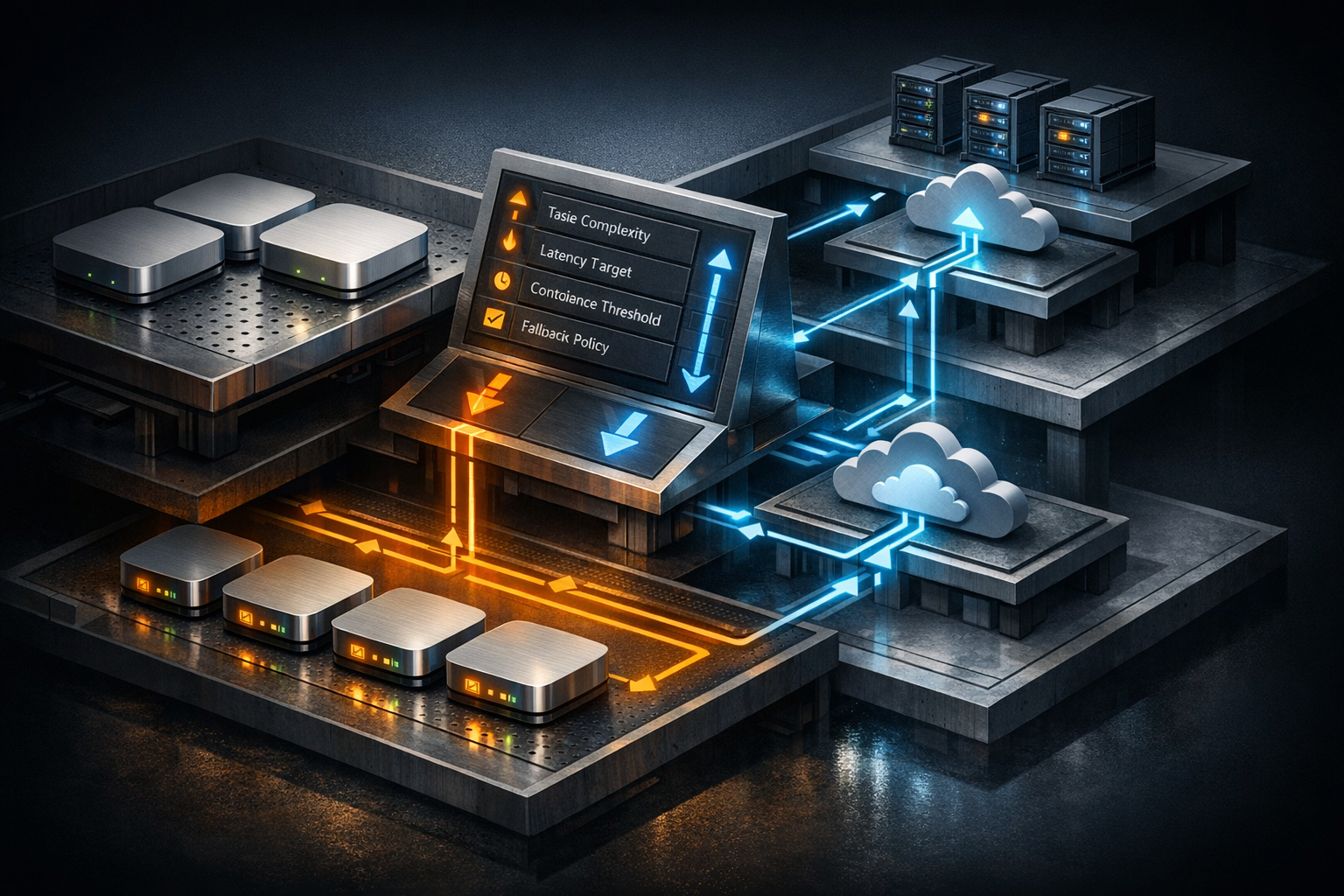

Instead of trying to replace API calls wholesale, I would add a local inference layer that the Orchestrator can route to based on task type, confidence, latency targets, and fallback rules.

```python
from enum import IntEnum


class TaskComplexity(IntEnum):
    # Assumed ordering; IntEnum makes the <= comparisons below valid.
    SIMPLE = 1
    MEDIUM = 2
    COMPLEX = 3


class InferenceRouter:
    def __init__(self, local_model, cloud_client):
        self.local = local_model
        self.cloud = cloud_client

    async def route(self, task):
        if task.complexity <= TaskComplexity.SIMPLE:
            # Simple tasks stay on the local model.
            return await self.local.generate(
                prompt=task.prompt,
                max_tokens=task.max_tokens,
            )
        elif task.complexity <= TaskComplexity.MEDIUM:
            # Medium tasks try local first and escalate on low confidence.
            local_result = await self.local.generate(
                prompt=task.prompt,
                max_tokens=task.max_tokens,
            )
            if local_result.confidence < 0.7:
                return await self.cloud.generate(task)
            return local_result
        else:
            # Everything else goes straight to the cloud.
            return await self.cloud.generate(task)
```

The confidence score is the hard part. In practice, I would not trust a single self-reported confidence field from the local model. I'd combine several signals instead: schema validation, output length checks, task-specific heuristics, and periodic offline evaluation against a labeled sample set. For some workflows, a lightweight verifier model may also help.
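One way to combine the cheaper signals is to score structured output with a few deterministic checks. The sketch below assumes the local model is asked to return a JSON object. The function name, the equal weighting, and the `max_chars` limit are all illustrative choices, not a tuned policy.

```python
import json


def composite_confidence(raw_output, required_keys, max_chars=2_000):
    """Score a local model's JSON output from 0.0 to 1.0 using cheap checks.

    Unparseable output is a hard failure. Otherwise each check carries
    equal weight (an illustrative choice, not a tuned one).
    """
    # Schema validation, step 1: the output must parse as a JSON object.
    try:
        parsed = json.loads(raw_output)
    except (json.JSONDecodeError, TypeError):
        return 0.0
    if not isinstance(parsed, dict):
        return 0.0

    checks = [
        # Schema validation, step 2: every required field is present.
        all(key in parsed for key in required_keys),
        # Output length check: non-empty and not suspiciously long.
        0 < len(raw_output) <= max_chars,
        # Task heuristic: required fields must not be null or empty strings.
        all(parsed.get(key) not in (None, "") for key in required_keys),
    ]
    return sum(checks) / len(checks)


if __name__ == "__main__":
    good = '{"label": "billing", "priority": "high"}'
    print(composite_confidence(good, ["label", "priority"]))
```

A score like this could feed the router's threshold in place of a model-reported confidence, while offline evaluation against labeled samples checks whether the threshold is well calibrated.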

| Agent | Local Inference Candidate? | Why / Why Not |
|---|---|---|
| Sparkles | Yes (high priority) | High volume, short prompts, and many tasks that look like Q&A routing or summarization |
| Orchestrator | Yes (medium priority) | Routing decisions and task classification are good fits for smaller local models |
| Soundwave | Partial | Email classification can be local; drafting and nuanced replies may still need cloud quality |
| Harvest/Insurance | Partial | Extraction and normalization fit local models better than complex analysis |
| Concierge | Mostly cloud | Ambiguous, multi-step requests still benefit from stronger hosted models |
| Blog Pipeline | Mostly cloud | Long-form generation and editorial nuance still favor top-tier hosted models |

This maps well to the prompt-to-production pipeline we've been building. Different jobs need different tools.

TL;DR: Local inference can pay off, but only if you benchmark real workloads and avoid making ROI decisions from unannounced hardware specs.

The original draft included a 12-18 month payback estimate tied to assumed M5 pricing, power draw, and performance. That's directionally plausible, but too specific without published hardware details and measured workload data.

A more defensible way to frame the economics is this:

So would a future Apple Silicon upgrade pay for itself? Possibly, especially for high-volume agents that do repetitive work. But I would only publish a hard break-even number after running a benchmark suite on production-like traffic and measuring three things: throughput, quality, and fallback rate.
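Once those measurements exist, the break-even arithmetic itself is simple. The sketch below shows its shape only. Every number in the example call is hypothetical (the per-token price, local share, fallback rate, and hardware cost are placeholders, not our figures), and the model ignores power, maintenance, and engineering time.

```python
def monthly_savings(calls_per_day, tokens_per_call, price_per_million_tokens,
                    local_share, fallback_rate, days=30):
    """Estimate monthly API spend avoided by routing work to a local model.

    local_share:   fraction of calls the router sends to the local model first.
    fallback_rate: fraction of those local attempts that still escalate to cloud.
    """
    monthly_tokens = calls_per_day * tokens_per_call * days
    baseline_cost = monthly_tokens / 1_000_000 * price_per_million_tokens
    # Only calls that are answered locally without escalation avoid API spend.
    served_locally = local_share * (1 - fallback_rate)
    return baseline_cost * served_locally


def payback_months(hardware_cost, savings_per_month):
    """Months until avoided API spend covers the hardware cost."""
    if savings_per_month <= 0:
        return float("inf")
    return hardware_cost / savings_per_month


if __name__ == "__main__":
    # All inputs below are hypothetical placeholders for illustration.
    savings = monthly_savings(
        calls_per_day=200, tokens_per_call=1_500,
        price_per_million_tokens=3.0, local_share=0.8, fallback_rate=0.2,
    )
    print(f"savings/month: ${savings:.2f}")
    print(f"payback: {payback_months(600, savings):.1f} months")
```

The fallback rate matters as much as throughput here: every escalated request pays for the cloud call anyway, so a high fallback rate erases most of the savings.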

If you're planning for that now, start with current hardware and build the measurement harness first. That's more valuable than guessing at future specs.

TL;DR: The biggest risks are not raw compute; they're memory pressure, quality regression, and the operational complexity of supporting two inference paths.

Based on our M4 testing and general local-inference constraints, these are the failure modes I'd expect first:

Our memory systems and production hardening work assumed a simpler inference path per agent. A hybrid local-cloud design is still the right direction, but it needs stronger monitoring and clearer rollback rules.
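A rollback rule can start as a rolling fallback-rate check. The class below is a minimal sketch, assuming the router reports whether each locally attempted request had to escalate to the cloud. The class name, window size, and thresholds are hypothetical defaults that would need tuning against real traffic.

```python
from collections import deque


class FallbackMonitor:
    """Track the recent local-to-cloud fallback rate over a sliding window."""

    def __init__(self, window=200, rollback_threshold=0.3, min_samples=50):
        # deque(maxlen=...) silently drops the oldest event once full.
        self.events = deque(maxlen=window)
        self.rollback_threshold = rollback_threshold
        self.min_samples = min_samples

    def record(self, fell_back):
        """Record one local attempt; fell_back is True if it escalated."""
        self.events.append(bool(fell_back))

    @property
    def fallback_rate(self):
        return sum(self.events) / len(self.events) if self.events else 0.0

    def should_roll_back(self):
        """True once enough samples exist and the rate exceeds the threshold."""
        return (len(self.events) >= self.min_samples
                and self.fallback_rate > self.rollback_threshold)


if __name__ == "__main__":
    monitor = FallbackMonitor(window=10, rollback_threshold=0.3, min_samples=5)
    for fell_back in [True, False, True, False, True]:
        monitor.record(fell_back)
    print(monitor.fallback_rate, monitor.should_roll_back())
```

Requiring a minimum sample count keeps a handful of early failures from triggering a rollback before the rate is meaningful.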

TL;DR: Build the routing abstraction now, benchmark on current Apple Silicon, and treat any future M5 gains as an optimization rather than the foundation of the strategy.

I'm building the InferenceRouter abstraction against our existing M4 workers first so we can evaluate local-vs-cloud routing with real tasks before any future hardware arrives. That gives us a cleaner decision framework:

If future Apple Silicon delivers a meaningful jump in local inference performance, great. We can swap in faster hardware without rewriting the routing logic.

If you're building something similar with on-device LLM processing, whether on Apple Silicon or another edge-friendly platform, the useful question isn't "Can local replace cloud?" It's "Which tasks should move first, and how will we know if the tradeoff is worth it?"

Local inference will not replace cloud APIs outright. Even if future Apple Silicon materially improves local inference, most teams will still want a hybrid setup. Local models are strongest on narrow, repetitive tasks with clear success criteria. Cloud models still earn their keep on complex reasoning, long-form generation, and ambiguous requests.

How much local inference saves depends on workload shape more than headline model size. If you have high-volume, low-complexity tasks, local inference can reduce variable API spend. If your workload is low-volume but quality-sensitive, the savings may be small because you'll keep routing most requests to hosted models anyway.

MLX is one of the strongest options for local inference on Apple Silicon because it is designed for the platform and takes advantage of its unified memory model. That said, "best" depends on your stack. llama.cpp with Metal support remains useful, especially if you care about portability, model format flexibility, or an existing toolchain outside the Apple ecosystem.

There is no need to wait for new hardware before starting. Build the abstraction and evaluation pipeline now on current hardware. That work will still matter if future chips are faster, and it protects you from making architecture decisions based on unverified product rumors.

For practical agent workloads, memory is often the first hard limit. A single quantized 7B model may be workable on a moderately provisioned system, but production setups also need room for context windows, orchestration services, and monitoring. If you expect to keep multiple models loaded or handle larger contexts, plan for more headroom than your first benchmark suggests.
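A back-of-the-envelope estimate helps size that headroom. The sketch below assumes a 7B model quantized to 4 bits, with architecture numbers (32 layers, 8 key/value heads, head dimension 128) typical of a Mistral-7B-style configuration with grouped-query attention. Treat those values as assumptions and read the real ones from your model's config. Real quantized files also carry scale and metadata overhead that this ignores.

```python
GIB = 2**30


def estimate_memory_gib(params_billion, bits_per_weight, n_layers, n_kv_heads,
                        head_dim, context_tokens, kv_bytes=2):
    """Return (weights_gib, kv_cache_gib) for one loaded model.

    kv_bytes=2 assumes the KV cache is stored in 16-bit precision.
    """
    weights = params_billion * 1e9 * bits_per_weight / 8
    # Keys and values (factor of 2) are cached per layer, per KV head, per token.
    kv_cache = 2 * n_layers * n_kv_heads * head_dim * kv_bytes * context_tokens
    return weights / GIB, kv_cache / GIB


if __name__ == "__main__":
    # Assumed architecture values; check your model's config before relying on them.
    weights_gib, kv_gib = estimate_memory_gib(
        params_billion=7, bits_per_weight=4,
        n_layers=32, n_kv_heads=8, head_dim=128, context_tokens=8_192,
    )
    print(f"weights ~{weights_gib:.1f} GiB, KV cache ~{kv_gib:.1f} GiB")
```

The KV cache grows linearly with context length and with the number of concurrent requests, which is why a model that fits comfortably in a first benchmark can still cause memory pressure in production.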

A future M5 Mac mini might make local inference more compelling for an agent fleet, but that's not the part worth waiting on. The durable advantage comes from designing a system that can route work intelligently between local and cloud models.

If we do that well, faster hardware becomes a bonus instead of a dependency.

If you're planning a similar architecture and want help benchmarking local inference, designing fallback logic, or sizing an agent fleet for production, talk to Elegant Software Solutions. We'll help you separate the hype from the workloads that actually pencil out.
